
4.5 Variance

Expectation is a weighted average, and consequently is a measure of the location of the pmf. The spread, or dispersion, of a random variable is usually measured by the variance: the expected squared deviation about the expectation.

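The variance of the random variable $X$, $\mathrm{Var}(X)$, is defined as

$$\mathrm{Var}(X) = E\left[(X - E[X])^2\right].$$

The standard deviation of $X$, $\mathrm{sd}(X)$, is defined to be the square root of the variance, $\mathrm{sd}(X) = \sqrt{\mathrm{Var}(X)}$.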

The variance is the expectation of the function $g(X) = (X - \mu)^2$ of the random variable $X$, where $\mu = E[X]$ is a number.
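To make the definition concrete, here is a minimal Python sketch that computes the expectation, variance and standard deviation of a discrete random variable directly from its pmf. The pmf used is hypothetical and chosen only for illustration; the function names `expectation` and `variance` are likewise assumptions, not notation from these notes.

```python
from fractions import Fraction


def expectation(pmf):
    """Return E[X] for a pmf given as a dict {value: probability}."""
    return sum(x * p for x, p in pmf.items())


def variance(pmf):
    """Return Var(X) = E[(X - mu)^2], computed directly from the definition."""
    mu = expectation(pmf)
    return sum((x - mu) ** 2 * p for x, p in pmf.items())


# Hypothetical pmf on {0, 1, 2}, used only as an illustration.
pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}

mu = expectation(pmf)      # 1
var = variance(pmf)        # 1/2
sd = float(var) ** 0.5     # square root of the variance
print(mu, var, sd)
```

Exact fractions are used so that the result can be compared with a hand calculation without rounding error.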

Aside: To get a feel for standard deviations, experience (and some nice theory in later courses!) suggests that for many random variables approximately $95\%$ of the probability mass falls within $2$ standard deviations of the mean of the random variable.
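As a rough illustration of the aside, the sketch below takes one hypothetical example, a Binomial(20, 0.5) random variable, and adds up the probability mass lying within two standard deviations of its mean. Both the choice of distribution and the reading of the rule of thumb as "about 95% within 2 standard deviations" are assumptions made for this example.

```python
from math import comb, sqrt

# Hypothetical example: X ~ Binomial(n, p), chosen only for illustration.
n, p = 20, 0.5
pmf = {k: comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)}

mu = sum(k * q for k, q in pmf.items())                     # mean, 10 here
sd = sqrt(sum((k - mu) ** 2 * q for k, q in pmf.items()))   # sqrt(5) here

# Total probability of the values within 2 standard deviations of the mean.
mass_within_2sd = sum(q for k, q in pmf.items() if abs(k - mu) <= 2 * sd)
print(round(mass_within_2sd, 4))  # 0.9586
```

Other distributions give somewhat different figures, which is why the statement is only a rule of thumb.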

Exercise 4.22.

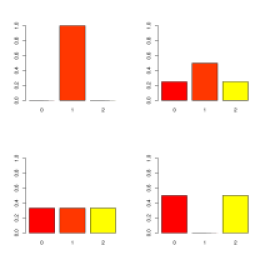

Suppose that four random variables , , and on have pmfs

respectively. These are plotted in the graphs:

Note that for each pmf the probabilities sum to $1$. The expectations are the same, so that

Find the variances.

Solution.

The different variances are

We see that

.

This agrees with the intuition about dispersion suggested by the bar plots.

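This formulation of the variance is inconvenient for calculation, so alternative forms have been derived which simplify evaluation. Writing $\mu = E[X]$ as a constant, we have

$$\begin{aligned}
\mathrm{Var}(X) &= E\left[(X - \mu)^2\right] \\
&= E\left[X^2 - 2\mu X + \mu^2\right] \\
&= E[X^2] - 2\mu E[X] + \mu^2 \\
&= E[X^2] - \mu^2 \\
&= E[X^2] - (E[X])^2 .
\end{aligned}$$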

Exercise 4.23.

Find the variance of a random digit uniformly distributed on the integers .

Solution.

From above , and

So
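As a numerical check on Exercise 4.23, the sketch below assumes the random digit is uniform on the integers $0, 1, \dots, 9$ (the set is not shown above, so this is an assumption). It computes the variance in two ways: directly from the definition $E[(X - E[X])^2]$ and via the shortcut $E[X^2] - (E[X])^2$.

```python
from fractions import Fraction

# Assumption: the random digit takes values 0, 1, ..., 9, each with probability 1/10.
values = range(10)
p = Fraction(1, 10)

e_x = sum(x * p for x in values)        # E[X]
e_x2 = sum(x**2 * p for x in values)    # E[X^2]

var_shortcut = e_x2 - e_x**2                                # E[X^2] - (E[X])^2
var_definition = sum((x - e_x) ** 2 * p for x in values)    # E[(X - E[X])^2]

print(e_x, e_x2, var_shortcut)  # 9/2 57/2 33/4
```

Under this assumption both routes give $33/4 = 8.25$, and the shortcut needs only the two sums $E[X]$ and $E[X^2]$.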

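As with linearity for expectation, there is an important result for the variance of linear functions of a random variable $X$. Suppose $a$ and $b$ are constants. Then

$$\mathrm{Var}(aX + b) = a^2\,\mathrm{Var}(X).$$

This result shows the important properties of variance: adding a constant $b$ shifts the distribution but leaves the variance unchanged, while multiplying by a constant $a$ scales the variance by $a^2$ (and the standard deviation by $|a|$).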
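The standard result $\mathrm{Var}(aX + b) = a^2\,\mathrm{Var}(X)$ can be checked numerically. The sketch below is only an illustration: the fair-die pmf, the constants `a = 3` and `b = -2`, and the helper names `variance` and `linear_transform` are all hypothetical choices, not taken from these notes.

```python
from fractions import Fraction


def variance(pmf):
    """Return Var(X) for a pmf given as a dict {value: probability}."""
    mu = sum(x * p for x, p in pmf.items())
    return sum((x - mu) ** 2 * p for x, p in pmf.items())


def linear_transform(pmf, a, b):
    """Return the pmf of Y = a*X + b, merging values that coincide (e.g. when a = 0)."""
    out = {}
    for x, p in pmf.items():
        y = a * x + b
        out[y] = out.get(y, 0) + p
    return out


# Hypothetical example: a fair six-sided die and the transformation Y = 3X - 2.
die = {x: Fraction(1, 6) for x in range(1, 7)}
a, b = 3, -2

var_x = variance(die)                           # 35/12
var_y = variance(linear_transform(die, a, b))   # 105/4
print(var_x, var_y, a**2 * var_x)
```

Changing `b` leaves `var_y` unchanged, while changing `a` rescales it by `a**2`.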

Exercise 4.24.

For a random variable , and . Find

- i.
- ii.
- iii.
- iv.

Solution.

- i.
- ii.
- iii.
- iv.

In summary